Research Article

Meta-Analysis and Quality of Behavioral Interventions to Treat Stereotypy in Children and Adolescents

John W. Maag *

Department of Special Education and Communication Disorders, Barkley Memorial Center, University of Nebraska-Lincoln, Lincoln, USA

*Corresponding author: John W. Maag, Department of Special Education and Communication Disorders, Barkley Memorial Center, University of Nebraska-Lincoln, Lincoln, USA. E-mail: jmaag1@unl.edu

Citation: Maag JW (2020) Meta-Analysis and Quality of Behavioral Interventions to Treat Stereotypy in Children and Adolescents. J Psychiatry Behav Ther 2020: 01-36

Received Date: 11 February 2020; Accepted Date: 23 February 2020; Published Date: 27 February 2020

Abstract

Stereotypy comprises several response classes of behavior that lack variability and a clear social function and that can be categorized as either lower order (e.g., hand flapping, body rocking) or higher order (e.g., echolalia, need for sameness). There have been several reviews of this literature, although only four have been conducted since quality indicators for single case research designs (SCRDs) were published in 2005. However, none of the reviews addressed all the PRISMA guidelines, especially accounting for publication bias. Further, no reviews focused solely on studies conducted in school settings, a variable that is important to address in terms of implications for educators. Therefore, the purpose of the present meta-analysis was to extend results from previous reviews in a more systematic fashion, account for publication bias, and focus on school settings. In addition, the quality of the 15 obtained studies was assessed using the Council for Exceptional Children’s (CEC) eight quality indicators. Results from standard mean difference (SMD), improvement rate difference (IRD), and Tau-U effect size calculations indicated that intervention effectiveness ranged from small to moderate.

Keywords: Tau-U, Behavioral Interventions, PRISMA

Introduction


Stereotypy consists of several response classes that lack variability and a distinct social function. Early researchers believed that it was totally non-functional and had no apparent antecedent stimuli (e.g., [1]). However, Newel, Incledon, Bodfish, and Sprague found that the extent of variability may differ across response classes and that there is some uncertainty about how many episodes of behavior are needed to constitute invariance [2]. Although some neurobiological systems and pharmacological interventions influence stereotypy, operant theory is the dominant paradigm for explaining its occurrence: stereotypy is maintained or reinforced by the consequences that follow the behavior. Further, researchers using functional analyses have long determined that stereotypy is maintained by positive, negative, or sensory reinforcement, or some combination of the three [3]. Hanley, Iwata, and McCord found that 61% of stereotypy is maintained by automatic reinforcement (i.e., operant mechanisms independent of the social environment) [4]. Lydon, Moran, Healy, Mulhern, and Enright-Young described two forms of stereotypy: lower order and higher order [5]. Lower order stereotypy consists of repetitive motor behaviors such as hand flapping, body rocking, tapping, and adherence to sameness. Higher order stereotypy consists of behaviors such as echolalia, need for sameness, and performing rituals that follow rigid sets of mental rules.

Stereotypy is characteristic of many individuals with autism spectrum disorder (ASD), developmental disabilities, and intellectual disabilities, although it is more common and severe in ASD [5,6]. It is also exhibited by individuals with normal development. However, individuals with autism and developmental disabilities display stereotypy across diverse stimulus contexts, in combination with multiple repetitive response classes, and in a fashion that is prominent to others in the environment [7]. Rapp and Vollmer also noted that the apparent insensitivity of some behaviors to possible opposing social variables appears to be a salient attribute for differentiating between behaviors that are only repetitive and those that are stereotypic.

Self-stimulatory behaviors generally pose no threat to the child or other persons in the environment. However, they may interfere with the occurrence of appropriate play, learning, and acquiring new behaviors. Prior to the 1980s, stereotypy was often treated with some form of punishment such as, but not limited to, time-out, physical exercise, slaps, electric shock, and overcorrection [8]. Around the end of the 1970s, Rincover used sensory extinction to suppress stereotypy [9]. This procedure involved identifying the controlling sensory modality maintaining a self-stimulatory behavior and removing that source of reinforcement. For example, if a child repeatedly flicked a light switch because the clicking sound it made was reinforcing, then the switch would be replaced with one that was noiseless. Since then, researchers have successfully treated stereotypy with such interventions as differential reinforcement techniques, noncontingent reinforcement, environmental enrichment, competing stimuli, and response blocking [10].

A major problem with punishment techniques such as overcorrection, and with response blocking (i.e., physically stopping a self-stimulatory behavior as a child begins to emit it), is that they result in negative collateral behaviors such as aggression [11-13]. This negative side effect, along with the emphasis on using less restrictive, non-aversive interventions, resulted in research on the use of response redirection to treat stereotypy [14]. This approach involved the delivery of prompts for children to engage in an alternative response each time they emitted the target behavior. For example, children who engaged in vocal stereotypy (i.e., echolalia) would be required to verbally answer questions or imitate appropriate vocalizations until they met a goal of three consecutive correct responses without engaging in the target behavior [15].

There have been several systematic reviews of techniques to treat stereotypy during the past two decades. However, only four have been conducted since quality indicators for single case research designs (SCRDs) were published by Horner and his colleagues in 2005 [16]. In that same year, Rapp and Vollmer reviewed issues related to stereotypy definitions, behavioral explanations for its occurrence, techniques for identifying operant functions, and types of behavioral interventions [7]. However, their manuscript was accepted for publication in 2004 and, consequently, no studies would have been included that addressed the Horner et al. quality indicators. In addition, their review was descriptive and not conducted in a way that has come to be considered systematic. DiGennaro-Reed, Hirst, and Hyman conducted a 30-year review of assessment and treatment of stereotypic behavior in children with autism and developmental disabilities from 1980 to 2010 [17]. However, they neither examined study quality nor calculated any effect sizes. A systematic review conducted by Lydon et al. [6] did assess study quality using the three-stage protocol developed by Reichow [18] and calculated two types of effect sizes: percentage reduction from baseline (PRD) and percentage of zero data (PZD). However, their review focused only on response redirection for treating stereotypy and included adults up to 66 years of age. Finally, Lydon et al. [5] assessed study quality and calculated PZD effect sizes but only included studies in which participants had autism, not developmental or intellectual disabilities. Most notably, none of the reviews addressed or accounted for publication bias, which is an important component emphasized in the PRISMA document [19].

The purpose of the present meta-analysis was to extend the previous two reviews of Lydon and her colleagues in several ways. First, it adhered to all PRISMA guidelines for conducting a transparent systematic review [19]. Second, all techniques since 2005 were included in the current meta-analysis, not just response redirection. Third, obtained studies were not limited to those that only had participants with autism. Fourth, three different effect size calculations were used: standard mean difference (SMD), improvement rate difference (IRD), and Tau. Campbell [20] pointed out that PZD is a rigorous effect size for characterizing the degree of total behavior suppression but is inappropriate for summarizing treatment effects related to the reduction (versus elimination) of behavior. It would appear optimal for interventions to completely suppress all stereotypical behaviors, but this is unrealistic given their intractability. Further, these behaviors are only problematic when children are learning and performing academic skills and involved in social interactions. Finally, any attempts at, or successes in, totally suppressing self-stimulatory behaviors need to be accompanied by differential reinforcement of alternative (DRA) behaviors so that a functional alternative can be reinforced to avoid a “behavioral vacuum,” which would otherwise provoke response covariation, resulting in another inappropriate behavior from the same response class being displayed [21].

Method

A systematic search was performed to identify the extant research on behavioral interventions to decrease stereotypy in children and adolescents. The search methods were consistent with the 12-item PRISMA statement for reporting meta-analyses [19]. The purpose was to ensure the clarity and transparency of the systematic review.

Academic Search Premier was the search source with the following selected databases: ERIC, MEDLINE, PsycARTICLES, and PsycINFO. The following Boolean terms/phrases were used: (“treatment of stereotypy”) OR (“treatment of stereotypical behaviors”) OR (“treatment of self-stimulation”) OR (“treatment of self-stimulatory behaviors”) AND (“children”) OR (“adolescents”) OR (“youth”) OR (“child”) OR (“teenagers”). In addition, ancestral searches were conducted of three journals that publish exclusively or primarily SCRD studies: Journal of Applied Behavior Analysis, Research in Autism Spectrum Disorders, and Research in Developmental Disabilities. Finally, the references of three stereotypy reviews published subsequent to 2005 were searched [5,6,17].

Eligibility Criteria and Study Selection

Only studies using SCRDs were included. Studies had to be in English and published in peer-reviewed journals between January 1, 2005 and December 30, 2018. The date of 2005 was selected because it was the year quality indicators for SCRD studies were developed and published [16]. In addition, Cook and Tankersley discussed the problems of trying to “retrofit” present-day quality indicators to studies published years or even decades ago [22]. Participants considered in the present review were children or adolescents from ages 5 to 18 in school/educational settings whose stereotypical behaviors required intervention. This age range was selected because it corresponds to children who would be in K-12 schools. The reason for focusing only on school settings was to address the relevance of the interventions for educators. Only behavioral interventions were considered, and studies conducted in non-school settings (e.g., home, clinic) were excluded.

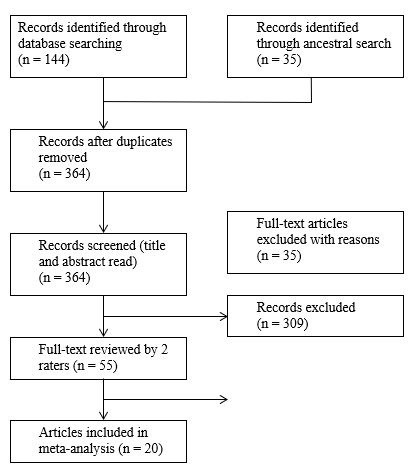

Studies were identified and retained at different stages based on PRISMA guidelines, and the results are displayed in Figure 1. After duplicates were removed, 163 total records were identified that were articles from peer-reviewed journals published no earlier than 2005. Out of the 163 entries, 153 were retained to have their titles and abstracts read. Of those, 55 were retained to be read in their entirety (i.e., method sections for inclusion/exclusion criteria). Two graduate students were trained by the researcher in how to read the method sections of each of the 55 studies. One graduate student read all 55 studies (i.e., method sections) while the other read 13 (30%) randomly selected studies, and their interrater agreement was 100%. A total of 39 articles were removed from the 55 read because they were not conducted in school settings. After this flow of information process, 15 articles were retained for the current review.

Figure 1: Search results using PRISMA guidelines

Coding Procedures

Descriptive characteristics

The 15 articles retained from the search were coded along six variables: (a) participant age, gender, and diagnosis/educational label; (b) setting; (c) type of design; (d) dependent variables; (e) type of behavioral intervention; and (f) intervention agent. Two graduate assistants were trained by the experimenter to code the six variables. Four studies were randomly selected, and the experimenter demonstrated the coding process on two of them through instructions and modeling. The two graduate assistants coded the remaining two studies with the experimenter providing performance feedback. The two graduate students then each coded the remaining studies independently of each other. Inter-rater reliability (IRR) was calculated for eight randomly selected studies (50%). This percentage was congruent with other published SCRD meta-analyses (e.g., [23-25]).

Methodological quality

Two graduate assistants appraised the quality of each article based on the Council for Exceptional Children’s (CEC) Standards for Evidence-Based Practices [26] that consisted of 22 component items across eight quality indicators (QIs) for SCRDs. The same training format used for coding descriptive characteristics was used for coding QIs. A binary score of one (met) or zero (not met) was used in the coding scheme. This method was previously used by Common, Lane, Pustejovsky, Johnson, and Johl [27]. A coding sheet with the 22 components across eight quality indicators was created in Excel©. The sheet consisted of three columns. The first column contained the quality indicator, the second column had the description, and the third column consisted of clarification developed by Common and his colleagues.

Statistical Analysis

Data extraction

Data were extracted from the graph(s) in each study using Engauge Digitizer [28], an open source digitizing software package that converts graphic image files (e.g., .jpg, .bmp) into numerical data. Engauge is a free software package comparable to Biosoft’s Ungraph 5.0, which was recommended in the manual developed by Nagler, Rindskopf, and Shadish [29] for conducting SCRD meta-analyses and used in previous meta-analyses (e.g., [24]). In addition, all scores were converted into percentages by setting the upper and lower levels of the y axis for all students to 100 and 0, respectively, before extraction. Losinski et al. recommended this approach to address the inherent subjectivity with which target variables are operationally defined (e.g., “aggression” versus “hitting and pushing”) [24].
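The percentage conversion described above can be thought of as a linear rescaling of each digitized value against the y-axis limits of its graph. The following is a minimal sketch in Python under that assumption; the function name and the sample values are hypothetical and are not taken from the study or from the digitizing software.

```python
def to_percentage(value, axis_min, axis_max):
    """Linearly rescale a digitized y value so axis_min maps to 0 and axis_max to 100."""
    if axis_max <= axis_min:
        raise ValueError("axis_max must be greater than axis_min")
    return 100.0 * (value - axis_min) / (axis_max - axis_min)


# Hypothetical graph whose y axis runs from 0 to 40 responses per session.
raw_points = [10, 25, 40]
percentages = [to_percentage(y, 0, 40) for y in raw_points]
print(percentages)  # [25.0, 62.5, 100.0]
```

Rescaling every graph to the same 0 to 100 range puts outcomes that were measured in different units on a common scale before effect sizes are computed.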

Effect size calculations

Horner, Swaminathan, Sugai, and Smolkowski [30] noted that there is currently no consensus on the method for quantifying outcomes in SCRD meta-analysis, although some methods may be more robust or appropriate than others depending on data characteristics. Therefore, three types of effect sizes were calculated in the present meta-analysis. Standard mean difference (SMD) was calculated because it is the SCRD analog of Cohen’s d statistic: the mean of the baseline phase is subtracted from the mean of the intervention phase, and the difference is divided by the pooled standard deviation [31]. The similarity to Cohen’s d makes SMD an important statistic for comparison with non-single-case methods, for statistically analyzing moderator variables, and for accounting for publication bias, provided there are at least three participants in a study to calculate a between-case SMD. However, some consider SMD unreliable because the small number of observations and floor effects limit variability and result in overestimates of parametric treatment effects [30,32]. Losinski et al. [24] dealt with this problem by establishing a ceiling d at the 3rd quartile of the distribution of included SMD effect sizes, with any higher value considered an outlier. This practice (i.e., 3rd quartile ceiling) was also used in the present study. Improvement rate difference (IRD) was also computed because it provides an effect size similar to the risk difference used in medical treatment research, which has a proven track record in hundreds of studies [33]. Finally, Tau-U values were computed because Tau-U controls for monotonic trend (i.e., increasing data during baseline). The IRD and Tau-U were calculated using the calculators at www.singlecaseresearch.org/calculators. For studies in which improvement was in the decreasing direction, the correction feature for Tau-U was not used (i.e., only Tau was calculated). Effect sizes for SMD were calculated by hand.
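To make the SMD, Tau, and 3rd quartile ceiling calculations concrete, the sketch below works through one hypothetical AB contrast in Python. It is illustrative only: the function names and data are invented, the pooled standard deviation uses the usual degrees-of-freedom weighting, Tau is computed without any baseline trend correction, and the quartile method is whatever Python's `statistics.quantiles` uses by default, which may differ from the procedure used in the study. IRD is omitted because it requires identifying the minimum set of overlapping data points.

```python
from math import sqrt
from statistics import mean, quantiles, stdev


def smd(baseline, intervention):
    """Intervention mean minus baseline mean, divided by the pooled SD."""
    n_a, n_b = len(baseline), len(intervention)
    pooled_var = (
        (n_a - 1) * stdev(baseline) ** 2 + (n_b - 1) * stdev(intervention) ** 2
    ) / (n_a + n_b - 2)
    return (mean(intervention) - mean(baseline)) / sqrt(pooled_var)


def tau(baseline, intervention):
    """Tau without trend correction: (higher pairs - lower pairs) / all pairs."""
    higher = sum(b > a for a in baseline for b in intervention)
    lower = sum(b < a for a in baseline for b in intervention)
    return (higher - lower) / (len(baseline) * len(intervention))


def cap_at_third_quartile(effect_sizes):
    """Replace any value above the 3rd quartile with the 3rd quartile."""
    q3 = quantiles(effect_sizes, n=4)[2]  # default quartile method (an assumption)
    return [min(es, q3) for es in effect_sizes]


# Hypothetical percentages of intervals with stereotypy for one AB contrast.
baseline = [80, 70, 90]
intervention = [40, 30, 50]
print(smd(baseline, intervention))  # -4.0
print(tau(baseline, intervention))  # -1.0
```

In this sketch negative values indicate that the behavior decreased from baseline to intervention. How the sign is reported for a behavior-reduction target is a convention that has to be chosen and stated separately.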

Additional analysis

Moderator variables were addressed by computing independent t-tests to compare differences in the effectiveness of studies by type of dependent variable (i.e., vocal versus motor stereotypy) and age of participants. These two moderators were chosen because Losinski et al. [24] found significant differences in interventions based on contextual variables when examining dependent variable and age. Also, stereotypy may become more intractable as children get older. These t-tests were computed for all three effect size calculations (SMD, IRD, and Tau values) for each of the moderator variables.

Publication Bias

Publication bias, or the “file drawer” effect, refers to the potential bias that exists because published research is more likely to show positive findings [34]. In a meta-analysis of group design studies, the Meta-Win’s Fail-Safe function [35] can be used to estimate the number of studies with null results sufficient to reduce the observed effect size to a minimal level (i.e., < .20). However, there is no comparable formula for SCRD meta-analyses. Therefore, to address the “file drawer” effect, cases with no effect (i.e., 0) were added to the group of effect sizes until the overall effect was reduced to ineffective or suspect levels (d < .20; IRD < .37; Tau < .20). This process yields the number of potentially “filed” studies (i.e., not submitted for publication for whatever reason) needed to reduce effect sizes to insignificant levels (i.e., no observed effect).
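The zero-padding procedure described above can be sketched as a simple loop: keep appending cases with an effect of 0 until the mean effect size falls below the chosen cutoff. The Python below is an illustration under that reading; the function name and the effect sizes are hypothetical and do not reproduce the study's data.

```python
def zero_cases_needed(effect_sizes, threshold):
    """Count the zero-effect cases that must be added for the mean to drop below threshold."""
    if not effect_sizes:
        raise ValueError("effect_sizes must not be empty")
    if threshold <= 0:
        raise ValueError("threshold must be positive")
    total = sum(effect_sizes)
    n = len(effect_sizes)
    added = 0
    # Adding a zero leaves the sum unchanged and increases the count by one.
    while total / (n + added) >= threshold:
        added += 1
    return added


# Hypothetical IRD values for four cases, checked against the IRD cutoff of .37.
hypothetical_ird = [0.9, 0.8, 0.7, 0.6]
print(zero_cases_needed(hypothetical_ird, 0.37))  # 5
```

Here the four cases have a mean of .75; with four zeros added the mean is .375, still at or above .37, and with five it falls to about .33.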

Inter-Rater Reliability

Interrater reliability (IRR) was calculated on seven randomly selected articles out of the 16 included studies (44% of studies) for the six coded study characteristics and the quality indicators. IRR was calculated for both study characteristics and quality indicator components by dividing the total number of agreements by the total number of agreements plus disagreements for each item and then averaging across all items. Two graduate research assistants coded the articles for all variables; IRR was 87.9% (range: 71.4% - 100%, SD = 10.719) for study characteristics and 91.5% (range: 59% - 100%, SD = 12.039) for quality indicators.
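The agreement formula described above (agreements divided by agreements plus disagreements for each item, then averaged across items) can be illustrated with a short Python sketch. The item names and codes below are hypothetical and serve only to show the arithmetic.

```python
from statistics import mean


def item_agreement(codes_a, codes_b):
    """Percentage agreement on one item: agreements / (agreements + disagreements)."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("both raters must code the same non-empty set of studies")
    agreements = sum(a == b for a, b in zip(codes_a, codes_b))
    return 100.0 * agreements / len(codes_a)


# Hypothetical codes from two raters for two items across seven studies.
rater_a = {
    "setting": ["school"] * 7,
    "design": ["ABAB", "ATD", "MBD", "ABAB", "ATD", "ABAB", "MBD"],
}
rater_b = {
    "setting": ["school"] * 7,
    "design": ["ABAB", "ATD", "ABAB", "ABAB", "ATD", "ATD", "MBD"],
}

per_item = {item: item_agreement(rater_a[item], rater_b[item]) for item in rater_a}
overall = mean(per_item.values())
print(round(overall, 1))  # 85.7
```

In this example the raters agree on all seven studies for one item (100%) and on five of seven for the other (71.4%), which average to 85.7%.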

Results

Results are presented in three sections. The first section addresses descriptive features obtained from the studies including characteristics of participants and settings, design features, dependent variables, and intervention techniques. The second section presents the extent to which studies met each of the 22 component items pertaining to SCRD across CEC’s eight QIs. The final section contains effect size results.

Descriptive Features of Included Studies

Characteristics of participants

A total of 24 participants (21 male and 3 female) were included in the 15 studies. Four studies had six collective participants either younger than 5 or older than 18 [36-39] that were excluded from analyses. The mean age for male participants was 10.57 (range = 5 – 17, SD = 3.859). The three females were ages six, eight, and 10. Most of the participants were diagnosed with autism (n = 22). There was one female diagnosed with Rett Syndrome [40] and one male with a developmental disability and another one with an intellectual disability and seizure disorder [39]. In terms of ethnicity, four studies indicated participants were Caucasian [39,41-43], one Hispanic participant [39], and the remaining 10 studies did not report participants’ ethnicity.

Design features

A variety of SCRDs were used in the 15 studies. The most common was the reversal design [14,36,38,44,45]. Two studies used a reversal design embedded in an alternating treatments design [41,43] while another study also embedded a reversal design but within a multiple baseline design [46]. Two studies used an alternating treatments design by itself [39,42]. Two studies used a multi-element design [47,48] and another two studies used a changing conditions design [37,40]. Finally, one study used a combined reversal and changing criterion design [49].

Table 1: Dependent Variables, Intervention Type, and Intervention Agent

Dependent variables

There were two main response classes that served as the dependent variables: verbal stereotypy and motor stereotypy. Table 1 presents the dependent variables for the 16 studies reviewed. In general, the dependent variables were operationally defined quite specifically. For example, Meador et al. [40] defined hand wringing as rubbing the pads on the fingers of one hand against the pads on the fingers of the other hand, usually done by the shoulder. However, the dependent variable for one study was simply “vocalizations that were non-contextual or non-functional” [45]. All studies had multiple dependent variables because of the range of stereotypic behaviors except for Shillingsburg et al. [49], who only targeted “screaming.”

Interventions and intervention agent

Table 1 also presents information on the type of intervention, interventionist, and whether social validity was assessed. The effectiveness of response interruption and redirection (RIRD) was examined in three studies [36,45,46]. This intervention involves interrupting the target behavior and redirecting the person to engage in a different response. For example, Cassella and her colleagues gave participants a one-step direction such as “touch head” contingent upon them engaging in vocal stereotypy. Giles et al. [14] compared the differential effectiveness of RIRD (e.g., “touch your knee” after an episode of motor stereotypy) and response blocking (e.g., physically stopping the participant from performing stereotypy and saying “no”).

Four studies used antecedent, stimulus control-based interventions [41-43,48]. A typical technique was to make visual cue cards to teach participants when it was appropriate (green) and inappropriate (red) to engage in stereotypy. Langone and her colleagues used stimulus control by having the participant wear a tennis wrist band to cue him not to engage in stereotypy and response blocking in which a staff person would gently stop his movements when he attempted to touch his hands to his neck or shoulder.

Two studies specifically evaluated contingent and non-contingent reinforcement, differential reinforcement of other (DRO) behavior, and differential reinforcement of alternative (DRA) behavior [40,49], while one study investigated the use of self-recording and differential reinforcement for accurate recording [37]. Curiously, one study investigated the effectiveness of sensory integration (sensory diets and brushing with deep pressure) and concluded it was ineffective [38]. Studies examining this approach have numerous flaws and suspect outcomes (e.g., [50]).

The majority of the individuals delivering the treatment were the experimenter, graduate research assistant, or therapist (n = 9). However, six of the studies had educational personnel as the intervention agent. Four studies used the classroom teacher as the interventionist [39,44-46]. A student teacher implemented the intervention in one study [41] while a special education paraeducator administered the antecedent manipulation in another study [43]. Social validity was assessed by six of the studies. Two of these studies had a therapist conduct intervention [14,47] while another three studies used either teachers [45,46] or a student teacher [41]. Cassella et al. [36] indicated they assessed social validity but the interventionist was one of the experimenters.

Table 2: Quality Indicators Met by Study

Note: 1.1 Context/setting description; 2.1 Participant description; 2.2 Participant disability/at-risk status; 3.1 Role and description; 3.2 Training description; 4.1 Intervention procedures; 4.2 Materials description; 5.1 Implementation fidelity assessed/reported; 5.2 Fidelity dosage or exposure assessed/reported; 5.3 Fidelity assessed across relevant elements/throughout study; 6.1 Independent variable (IV) systematically manipulated; 6.2 Baseline description; 6.3 No or limited access to IV during baseline; 6.4 Design provides at least three demonstrations of experimental effect at three different times; 6.5 Baseline phase contains at least three data points; 6.6 Design controls for common threats to internal validity; 7.1 Socially important goals; 7.2 Description of dependent variable measures; 7.3 Reports effects of the intervention on all measures; 7.4 Minimum of three data points per phase; 7.5 Adequate interobserver agreement; 8.1 Study provides single-case graph clearly representing outcome data across all study phases.

Methodological Quality Indicators

CEC’s Standards for Evidence-Based Practices (2014), which consist of 22 component items across eight quality indicators (QIs) for SCRDs, were used to determine the methodological quality of reviewed studies. None of the 15 studies met all 22 items, although seven met 21 [14,36,39,43,45,46,49]. The lowest score (13) was obtained for the second experiment in the Rapp et al. [48] study. Overall, quality of the 15 studies was relatively high (mean = 19.06, range 14 – 21, SD = 2.374). The item met least often (8 [50%]) was 3.2, which required studies to describe one demographic characteristic of the interventionist (e.g., race, ethnicity, educational background, or licensure). The three items that make up implementation fidelity were also fairly low (mean = 9.67).

a Only one participant in the study but with sufficient data to calculate the mean and standard deviation.
b Only one participant’s data were used; the study had more than one participant but with unusable data to calculate effect sizes.

There were nine items that were met by all 15 studies: 2.2 Participant disability or risk status, 4.1 Detailed intervention procedures, 6.2 Description of baseline conditions, 6.3 Participants had no or limited access to the intervention, 6.4 Three demonstrations of experimental effects, 6.5 Baseline phases include three data points, 7.1 Outcomes are socially important, 7.2 Clear definition of dependent measures, and 7.3 Study reports results on all targeted variables. Table 2 displays the results for each component across QIs for all 15 studies. The grey shaded cells are those that received a 0 (N = 41 [9%]). Taken together, 11 out of 15 studies (73%) met 80% or more of the QIs (M = 92%, range = 82% - 95%, SD = 4.854).

Statistical Analysis

Effects of studies

Effect sizes were calculated for the first AB contrast for each participant in all included studies. Several studies used a multiple baseline design across three behaviors, so those participants had three AB contrasts. Effect sizes were then averaged for each study and appear in Table 3. Overall omnibus effect sizes for each type were as follows: SMD (range = -5.43 – 3.026, mean = 1.12, SD = 1.78), IRD (range = 0 – 1, mean = .70, SD = 0.310), and Tau (range = -1 – 1.15, mean = .37, SD = 0.650).

Independent samples t-tests with equal variances not assumed were calculated between studies that targeted vocal stereotypy and those that targeted motor stereotypy. Results were obtained between study effect sizes for IRD (t = 0.231, p = .408), Tau-U (t = -2.80, p = .007), and SMD (t = 2.53, p = .016). Consequently, interventions for vocal stereotypy were more effective than those for motor stereotypy on SMD and Tau-U effect sizes but not on IRD effect sizes. Participants were divided into young and old groups based on their median age: young (6 – 10) and old (11 – 16). Results were also obtained between study effect sizes for IRD (t = 1.31, p = .09), Tau-U (t = -2.09, p = .04), and SMD (t = 1.36, p = .09). The only significant test was for Tau-U, indicating that interventions were more effective for younger participants. However, given the nonsignificant findings for the other two effect sizes, these results are equivocal at best.
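An independent samples t-test with equal variances not assumed is commonly known as Welch's t-test. The Python sketch below computes the Welch t statistic and its approximate degrees of freedom using only the standard library. The function name and the two groups of effect sizes are hypothetical; obtaining a p value additionally requires the t distribution, for example through `scipy.stats.ttest_ind` with `equal_var=False`.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(group_1, group_2):
    """Return Welch's t statistic and the Welch-Satterthwaite degrees of freedom."""
    n1, n2 = len(group_1), len(group_2)
    # Squared standard error of each group mean.
    se1_sq = variance(group_1) / n1
    se2_sq = variance(group_2) / n2
    t = (mean(group_1) - mean(group_2)) / sqrt(se1_sq + se2_sq)
    df = (se1_sq + se2_sq) ** 2 / (se1_sq ** 2 / (n1 - 1) + se2_sq ** 2 / (n2 - 1))
    return t, df


# Hypothetical effect sizes for studies targeting vocal versus motor stereotypy.
vocal = [0.9, 0.8, 1.0, 0.7]
motor = [0.4, 0.6, 0.2, 0.5, 0.3]
t_stat, dof = welch_t(vocal, motor)
print(t_stat, dof)
```

Because the degrees of freedom are estimated from the two sample variances, they are usually not a whole number and never exceed the pooled-variance value of n1 + n2 - 2.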

Publication bias

To address the “file drawer effect,” the number of studies with results of zero required to reduce the overall effect to insignificant or suspect levels was determined for IRD and Tau effect sizes. SMD data were not used because the ceiling was set at the 3rd quartile, which was 3.026. It would take 44 cases to bring the overall IRD into the ineffective range (< .37) and 110 cases to bring the overall Tau score into the ineffective range (Tau < .20). SCRD studies typically have between one and six participants, with the average number of participants being three. It would take 15 studies with three participants for IRD and 37 studies with three participants for Tau that would have either been “filed” and not submitted for publication or represent “grey” literature. It is highly unlikely that, from 2005 to the present, 15 to 37 SCRD studies exist that would otherwise bring the present effect size results into the ineffective range.
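The conversion from zero-effect cases to unpublished studies reported above is a division by the assumed three participants per study, rounded up. A short Python check of that arithmetic follows; the function name is hypothetical.

```python
from math import ceil


def studies_needed(zero_cases, participants_per_study=3):
    """Number of whole studies required to supply the given number of zero-effect cases."""
    return ceil(zero_cases / participants_per_study)


print(studies_needed(44))   # 15 (IRD)
print(studies_needed(110))  # 37 (Tau)
```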

Discussion

The purpose of the present meta-analysis was to extend the previous two reviews of Lydon and her colleagues in several ways [5,6]. First, it adhered to all PRISMA guidelines for conducting a transparent systematic review [19]. Second, all techniques since 2005 were included in the current meta-analysis, not just response redirection. Third, obtained studies were not limited to those that only had participants with autism. In this section, characteristics of the studies, effectiveness of interventions to decrease stereotypy, and quality of the studies are addressed along with limitations.

Characteristics of the Studies

Although this meta-analysis was not limited to children and adolescents with autism, the majority of participants had been diagnosed with this disorder. This finding should not come as a surprise because between 85% and 99% of individuals with autism engage in motor stereotypy, vocal stereotypy, or both (e.g., [51,52]). Further, most of the participants were males. A recent study found that 3.6% of males had ASD compared to only 1.25% of females [53]. These prevalence estimates are similar to those in Great Britain [54], although the latter results are somewhat dated.

One of the inclusion criteria for the present review was that studies needed to be conducted in schools. One of the reasons for this eligibility criterion was that, according to the U.S. Department of Education [55], autism is the fourth largest category by number of students served under the Individuals with Disabilities Education Act. A related, disappointing finding was that the majority of individuals delivering intervention were the experimenter, graduate research assistant, or therapist, with only six of the studies employing educational personnel. Educational personnel are quite able to describe the types of challenges they face teaching students with autism but often lack the necessary training (e.g., [56]). However, given the high prevalence of stereotypy in this population, and the importance of suppressing these motor and vocal behaviors because of the negative impact they have on acquiring academic and social skills [5], it would behoove schools to provide educational personnel with the training necessary to address them. There are several unique approaches to providing educators with such training. For example, Saadatzi, Pennington, Welch, and Graham (2018) developed a tutoring system within a small-group context by combining technology and social robotics that featured a virtual and humanoid robot simulating a student.

Quality of Studies

There were two previous systematic reviews that assessed study quality. Lydon et al. [6] only focused on response redirection and included adults, whereas another review conducted by Lydon et al. [5] only focused on participants with autism. In the present review, none of the studies met all 22 quality indicator items. However, approximately half the studies met 21 of the indicators. Four of those studies used some version of response interruption and redirection [14,45,46]. Two additional studies focused on some types of antecedent manipulations [43,39] and one used a token economy and response cost [49]. Kratochwill et al. [31] proposed a 5-3-20 threshold to determine whether an intervention was evidence-based: a minimum of five studies that either meet standards or meet them with reservations for design quality and treatment effects, studies conducted by at least three research teams with no overlapping authorship at three different institutions, and a total of at least 20 combined participants. Based on the results of the current review, it does not appear that any of the interventions in studies that met 21 of the 22 CEC quality indicators could definitively be considered evidence-based at this time.
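The 5-3-20 threshold described above can be expressed as a simple check. The following is a minimal sketch rather than a procedure used in this review: the `Study` record, its field names, and the greedy way of counting independent research teams are all assumptions made for illustration, and the greedy count may undercount teams depending on the order of the studies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Study:
    # Hypothetical record for illustration; not data from the reviewed studies.
    authors: frozenset       # names of all authors on the study
    institution: str         # institution where the study was conducted
    participants: int        # number of participants in the study
    meets_standards: bool    # True if standards are met, with or without reservations


def count_independent_teams(studies):
    """Greedily count teams with no shared authors at distinct institutions.

    This is a simplifying assumption: a study counts as a new team only if none
    of its authors and not its institution have been seen before.
    """
    seen_authors = set()
    seen_institutions = set()
    teams = 0
    for study in studies:
        is_new_team = (
            study.authors.isdisjoint(seen_authors)
            and study.institution not in seen_institutions
        )
        if is_new_team:
            teams += 1
        seen_authors |= study.authors
        seen_institutions.add(study.institution)
    return teams


def meets_5_3_20(studies):
    """Return True if the studies satisfy all three parts of the 5-3-20 rule."""
    qualifying = [s for s in studies if s.meets_standards]
    enough_studies = len(qualifying) >= 5
    enough_teams = count_independent_teams(qualifying) >= 3
    enough_participants = sum(s.participants for s in qualifying) >= 20
    return enough_studies and enough_teams and enough_participants
```

Under these assumptions, a set of studies for a single intervention fails the check as soon as any one of the three conditions is unmet, which mirrors how the threshold is applied to each intervention separately.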

The most frequently unmet indicator was 3.2, which describes the specific amount of training required and the professional credentials of the interventionists. The absence of this indicator is not surprising since the majority of individuals who delivered intervention were the experimenter, a therapist, or a research assistant. Relatedly, only six studies assessed social validity (Brusa & Richman [41]; Cassella et al. [36]; Giles et al. [12]; Liu-Gitz & Banda [45]; Potter et al. [47]; Sloman et al. [46]). Social validity is an important variable to assess because teachers are unlikely to use interventions that require extensive training or expertise, are overly complicated, or are time-consuming [24].

Six of the studies failed to assess and report one or more items of quality indicator five: implementation fidelity. Implementation fidelity remains a challenge to address sufficiently in many areas of education (e.g., Kirk et al.) and refers to the degree to which an intervention follows the original concept and steps of its developers (Dusenbury et al.). Fidelity should also address how much of the original intervention has been implemented, how well different components have been applied, the opinions of participants regarding the effects of the program when possible, and the way the intervention's essential steps differentiate it from other similar interventions.

The last item, which six studies omitted, was item 7.4, which requires a minimum of three data points per phase. The primary way SCRD studies are analyzed is through visual inspection of the data. Data are scrutinized to identify changes in level, trend, and variability. Engaging in this process for all phases requires at least three data points, although five is preferable; otherwise, it is impossible to determine with any degree of certainty the effectiveness of any intervention. Further, effect sizes for SCRD studies, especially those calculated with non-overlap techniques, can be overinflated [33].

Effectiveness of Interventions

There were too many different types of interventions used to compare their differential effectiveness. The two most common types were RIRD [36,45,46] and antecedent, stimulus control-based techniques [41-43,48]. Overall, effect sizes indicated the effectiveness of the interventions was small to moderate. These results were similar to those obtained by Lydon et al. [5], who reported PZD effect sizes in the low to fair effectiveness range, while Lydon et al. [6] obtained PZD values mostly in the ineffective range, with only two of questionable effectiveness and one of high effectiveness. Apparently, both vocal and motor stereotypy are quite intractable regardless of the intervention. One of the Lydon et al. reviews focused on inhibitory stimulus control interventions while the other targeted RIRD. It is possible that the difficulty obtaining consistently effective responses may be due to the high level of sensory reinforcement stereotypy provides the individual or to a failure to conduct a functional behavioral assessment as a way to select an intervention that addresses the identified function (e.g., Cunningham et al.).

Conclusion

The purpose of the current meta-analytic systematic review was to extend the findings from several previous reviews. A limitation of all the previous reviews was that none of them addressed all 12 PRISMA guidelines, especially accounting for publication bias. The current review was the only one that focused solely on studies conducted in school settings. This variable is important to address in terms of implications for educators. Children spend more time at school than in any other setting outside of the home, and their behaviors are subject to ongoing scrutiny in the classroom. A discouraging finding in the present meta-analysis was that only six studies used school personnel as interventionists. Other than the experimenter or a graduate assistant, therapists were the most common persons to deliver treatment. Given the nature of stereotypy and that it is displayed most by individuals with autism, the type of therapist most likely to deliver treatment would be a Board Certified Behavior Analyst (BCBA; Deochand & Fuqua, 2016). However, schools have been slow to recognize BCBA therapists as a potentially valuable resource for children and adolescents with autism.

A question that still remains is whether behavioral treatments for stereotypy in children and adolescents are evidence-based. Although none of the 15 studies met all 22 CEC quality indicators, approximately half met 21 of the 22. The most common intervention was RIRD [36,12,45,46], followed by different forms of antecedent manipulations [43,48] and one study using a token economy and response cost [49]. However, none of the interventions reviewed met the 5-3-20 threshold [31]. An important limitation of the current review was that no studies published prior to 2005 were included; it is very possible that, had the entire corpus of studies been included, several interventions, such as RIRD and various forms of antecedent manipulations, would have met that threshold and been considered evidence-based.

Another limitation was the decision to use the absolute coding approach for assessing the 22-item quality indicators (0 = not met, 1 = met) versus the weighted method that gives partial credit (0 = not met, 0.5 = partially met, 1 = met). The reason for using the absolute system was to provide the most conservative estimate of study quality considering that studies investigating interventions for suppressing stereotypy have been conducted for over 35 years (e.g., Woods, 1983). Nevertheless, it does represent a limitation because giving studies partial credit helps answer the question of whether a certain intervention for a particular outcome is effective and thereby helps avoid Type II (false negative) errors [27]. Relatedly, a weighted coding scheme would be helpful in evaluating research conducted before quality indicators were published.
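The difference between the two coding approaches can be illustrated with a short sketch. The function names and the example ratings below are hypothetical and are not drawn from the studies in this review; the sketch only assumes that each of the 22 items is rated 0, 0.5, or 1 as described above.

```python
ALLOWED_RATINGS = (0, 0.5, 1)


def _validate(ratings):
    """Reject any rating outside the 0 / 0.5 / 1 scale."""
    for rating in ratings:
        if rating not in ALLOWED_RATINGS:
            raise ValueError(f"Unexpected rating: {rating!r}")


def absolute_score(ratings):
    """Absolute coding: an item counts only when fully met (rating of 1)."""
    _validate(ratings)
    return sum(1 for rating in ratings if rating == 1)


def weighted_score(ratings):
    """Weighted coding: partially met items (0.5) receive half credit."""
    _validate(ratings)
    return float(sum(ratings))


# Hypothetical study: 19 items met, 2 partially met, 1 not met (22 items total).
example_ratings = [1] * 19 + [0.5] * 2 + [0]
print(absolute_score(example_ratings))  # 19
print(weighted_score(example_ratings))  # 20.0
```

In this hypothetical case the absolute approach credits the study with 19 of 22 items, whereas the weighted approach credits it with 20, which shows why the absolute approach yields the more conservative estimate of study quality.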

References

- Lewis MH, Baumeister AA (1982) Stereotyped mannerisms in mentally retarded persons: Animal models and theoretical analyses. In: Ellis NR (ed.). International review of research in mental retardation. New York, NY: Academic Press. Pg no: 123-161.

- Newell KM, Incledon T, Bodfish JW, Sprague RL (1999) Variability of stereotypic body-rocking in adults with mental retardation. Am J Ment Retard 104: 279-288.

- Iwata BA, Dorsey MF, Slifer KJ, Bauman KE, Richman GS (1982) Toward a functional analysis of self-injury. J Appl Behav Anal 27: 197-209.

- Hanley GP, Iwata BA, McCord BE (2003) Functional analysis of problem behavior: A review. J Appl Behav Anal 36: 147-185.

- Lydon S, Moran L, Healy O, Mulhern T, Enright-Young K (2017) A systematic review and evaluation of inhibitory stimulus control procedures as a treatment for stereotyped behavior among individuals with autism. Dev Neurorehabil 20: 491-501.

- Lydon S, Healy O, O’Reilly M, McCoy A (2013) A systematic review and evaluation of response redirection as a treatment for challenging behavior in individuals with developmental disabilities. Res Dev Disabil 34: 3148-3158.

- Rapp JT, Vollmer TR (2005) Stereotypy I: A review of behavioral assessment and treatment. Res Dev Disabil 26: 527-547.

- Maag JW, Parks BT, Rutherford RB Jr (1984) Assessment and treatment of self-stimulation in severely behaviorally disordered children. Monograph in Behavioral Disorders 7: 27-39.

- Rincover A (1978) Sensory extinction: A procedure for eliminating self-stimulatory behavior in psychotic children. J Abnorm Child Psychol 6: 299-330.

- Hagopian LP, Toole LM (2009) Effects of response blocking and competing stimuli on stereotypic behavior. Behav Intervent 24: 117-125.

- Hagopian LP, Adelinis JD (2001) Response blocking with and without redirection for the treatment of pica. J Appl Behav Anal 34: 527-530.

- Lerman DC, Kelley ME, Vorndran CM, Van Camp CM (2003) Collateral effects of response blocking during the treatment of stereotypic behavior. J Appl Behav Anal 36: 119-123.

- Rapp JT, Dozier CL, Carr JE (2001) Functional assessment and treatment of pica: A single-case experiment. Behav Intervent 16: 111-125.

- Giles AF, St Peter CC, Pence ST, Gibson AB (2012) Preference for blocking or response redirection during stereotypy treatment. Res Dev Disabil 33: 1691-1700.

- Ahearn WH, Clark KM, MacDonald RP, Chung BI (2007) Assessing and treating vocal stereotypy in children with autism. J Appl Behav Anal 40: 263-275.

- Horner RH, Carr EG, Halle J, McGee G, Odom S, Wolery M (2005) The use of single-subject research to identify evidence-based practice in special education. Except Child 71: 165-179.

- DiGennaro-Reed FD, Hirst JH, Hyman SR (2012) Assessment and treatment of stereotypic behavior in children with autism and other developmental disabilities: A thirty year review. Res Autism Spectr Disord 6: 422-430.

- Reichow B (2011) Development, procedures, and application of the evaluative method for determining evidence-based practices in autism. In: Reichow B, Doehring P, Cicchetti DV, Volkmar FR (eds.). Evidence-based practices and treatments for children with autism. New York, NY: Springer. Pg no: 25-39.

- Liberati A, Altman DG, Tetzlaff J, Mulrow C, Gotzsche PC, et al. (2009) The PRISMA statement for reporting systematic reviews and meta-analyses of studies that evaluate health care interventions: Explanation and elaboration. PLoS Med 6:

- Campbell JM (2004) Statistical comparison of four effect sizes for single-subject design. Behav Modif 28: 234-246.

- Maag JW (2018) Behavior management: From theoretical implications to practical applications (3rd ed.). Boston, MA: Cengage Learning.

- Cook BG, Tankersley M (2007) A preliminary examination to identify the presence of quality indicators in experimental research in special education. In: Crockett J, Gerber MM, Landrum TJ (eds.). Achieving the radical reform of special education: Essays in honor of James M. Kauffman. Mahwah, NJ: Lawrence Erlbaum. Pg no: 189-212.

- Gage NA, Lewis TJ, Stichter JP (2012) Functional behavioral assessment-based interventions for students with or at-risk for emotional and/or behavioral disorders in school: A hierarchical linear modeling meta-analysis. Behav Disord 37: 55-78.

- Losinski M, Maag JW, Katsiyannis A, Parks-Ennis R (2014) Examining the effects and quality of interventions based on the assessment of contextual variables: A meta-analysis. Except Child 80: 407-422.

- Maggin DM, Briesch AM, Chafouleas SM (2013) An application of the What Works Clearinghouse standards for evaluating single-subject research: Synthesis of the self-management literature base. Remedial Spec Educ 34: 44-58.

- Council for Exceptional Children (2014) Council for Exceptional Children standards for evidence-based practices in special education. Except Child 80: 504-511.

- Common EA, Lane KL, Pustejovsky JE, Johnson AH, Johl LE (2017) Functional assessment-based interventions for students with or at-risk for high incidence disabilities: Field testing single-case synthesis methods. Remedial Spec Educ 38: 331-352.

- Mitchell M (2002) Engauge Digitizer (version 4.1) [computer software].

- Nagler EM, Rindskopf DM, Shadish WR (2008) Analyzing data from small N designs using multilevel models: A procedural handbook. Washington, DC: U. S. Department of Education.

- Horner RH, Swaminathan H, Sugai G, Smolkowski K (2012) Considerations for the systematic analysis and use of single-case research. Educ Treat Children 35: 269-290.

- Busk PL, Serlin RC (1992) Meta-analysis for single-case research. In: Kratochwill TR, Levin JR (eds.). Single-case research designs and analysis: New directions for psychology and education. Hillsdale, NJ: Lawrence Erlbaum. Pg no: 187-212.

- Scruggs TE, Mastropieri MA (2012) PND at 25: Past, present, and future trends in summarizing single-subject research. Remedial Spec Educ 34: 9-19.

- Parker RI, Vannest KJ, Brown L (2009) The improvement rate difference for single-case research. Except Child 75: 135-150.

- Rosenthal R (1979) The “file drawer problem” and tolerance for null results. Psychol Bull 86: 638-641.

- Rosenberg MS, Adams DC, Gurevitch J (2000) Metawin. Sunderland, MA: Sinauer.

- Cassella MD, Sidener TM, Sidener DW, Progar PR (2011) Response interruption and redirection for vocal stereotypy in children with autism: A systematic replication. J Appl Behav Anal 44: 169-173.

- Fritz JN, Iwata BA, Rolider NU, Camp EM, Neidert PL (2012) Analysis of self-recording in self-management interventions for stereotypy. J Appl Behav Anal 45: 55-68.

- Moore KM, Cividini-Motta C, Clark KM, Ahearn WH (2015) Sensory integration as a treatment for automatically maintained stereotypy. Behav Intervent 30: 95-111.

- Rispoli M, Camargo SH, Neely L, Gerow S, Lang R, et al. (2014) Pre-Session satiation as a treatment for stereotypy during group activities. Behav Modif 38: 392-411.

- Meador SK, Derby KM, Mclaughlin TF, Barretto A, Weber K (2007) Using response latency within a preference assessment. Behav Anal Today 8: 63-69.

- Brusa E, Richman D (2008) Developing stimulus control for occurrences of stereotypy exhibited by a child with autism. Int J Behav Consult Ther 4: 264-269.

- Conroy MA, Asmus JM, Sellers JA, Ladwig CN (2005) The use of an antecedent-based intervention to decrease stereotypic behavior in a general education classroom: a case study. Focus Autism Other Dev Disabil 20: 223-230.

- Haley JL, Heick PF, Luiselli JK (2010) Use of an antecedent intervention to decrease vocal stereotypy of a student with autism in the general education classroom. Child Fam Behav Ther 32: 311-321.

- Langone SR, Luiselli JK, Hamill J (2013) Effects of response blocking and programmed stimulus control on motor stereotypy: A pilot study. Child Fam Behav Ther 35: 249-255.

- Liu-Gitz L, Banda DR (2010) A replication of the RIRD strategy to decrease vocal stereotypy in a student with autism. Behav Intervent 25: 77-87.

- Sloman KN, Schulman RK, Torres-Viso M, Edelstein ML (2017) Evaluation of response interruption and redirection during school and community activities. Behav Anal: Res Practice 17: 266-273.

- Potter JN, Hanley GP, Augustine MC, Casey J, Phelps M (2013) Treating stereotypy in adolescents diagnosed with autism by refining the tactic of “using stereotypy as reinforcement”. J Appl Behav Anal 46: 407-423.

- Rapp JT, Patel MR, Ghezzi PM, O’Flaherty CH, Titterington CJ (2009) Establishing stimulus control of vocal stereotypy displayed by young children with autism. Behav Intervent 24: 85-105.

- Shillingsburg MA, Lomas JE, Bradley D (2012) Treatment of vocal stereotypy in an analogue and classroom setting. Behav Intervent 27: 151-163.

- Leong HM, Carter M, Stephenson JR (2015) Meta-analysis of research on sensory integration therapy for individuals with developmental and learning disabilities. J Dev Phys Disabil 27: 183-206.

- Campbell M, Locascio JJ, Choroco MC, Spencer EK, Malone RP, et al. (1990) Stereotypies and tardive dyskinesia: Abnormal movements in autistic children. Psychopharmacol Bull 26: 260-266.

- Mayes SD, Calhoun SL (2011) Impact of IQ, age, SES, gender, and race on autistic symptoms. Res Autism Spectr Disord 5: 749-757.

- Xu G, Strathearn L, Liu B, Bao W (2018) Prevalence of autism spectrum disorder among US children and adolescents: 2014-2016. JAMA 319: 81-82.

- Merrick J, Kandel I, Morad M (2004) Trends in autism. Int J Adolesc Med Health 16: 75-78.

- U.S. Department of Education, Institute for Education Sciences, National Center for Education Statistics (2018) The condition of education: Children and youth with disabilities.

- Canonica C, Eckert A, Ullrich K, Markowetz R (2018) Teachers’ perspectives on challenges in everyday school life with pupils with autism spectrum disorder. Quarterly Journal of Special Education and Related Disciplines 87: 232-247.
